Embedding Human Judgment in Scalable AI Workforce Systems (2026)

A practical framework for HR and workforce planning leaders to govern AI hiring, skills validation, and talent decisions

Why Workforce Planning Leaders Must Govern AI, Not Just Use It

Most workforce leaders don't have a technology problem. They have a judgment problem.

As workforce planning teams deploy increasingly sophisticated AI systems to sense labor markets, model workforce scenarios, and evaluate talent, a critical gap has emerged: the ability to work productively with intelligent machines while preserving human judgment, agency, and accountability.

The real risk isn't that AI will occasionally be wrong; it's that leaders will stop interrogating it, or delay action while waiting for false certainty.

This requires a deliberate set of capabilities: not technical skills, but root skills for working effectively with machines. These are the verification skills that enable leaders to interrogate AI outputs, recognize their limitations, and act with confidence even under uncertainty.

This framework shows how to define and develop the 8 verification skills that matter most, evaluate platforms that embed verification into workflows, and build ROI models that quantify judgment quality.

What Are Root Skills for AI-Enabled HR and Talent Teams?

Verification skills are foundational human capabilities that enable strategic workforce planning (SWP) and talent acquisition (TA) leaders to work effectively with AI systems while maintaining accountability, judgment quality, and organizational agency.

Key distinction:

Unlike technical skills (prompt engineering, API integration), verification skills are cognitive and strategic capabilities that govern when to trust AI, when to challenge it, and how to act decisively under uncertainty.

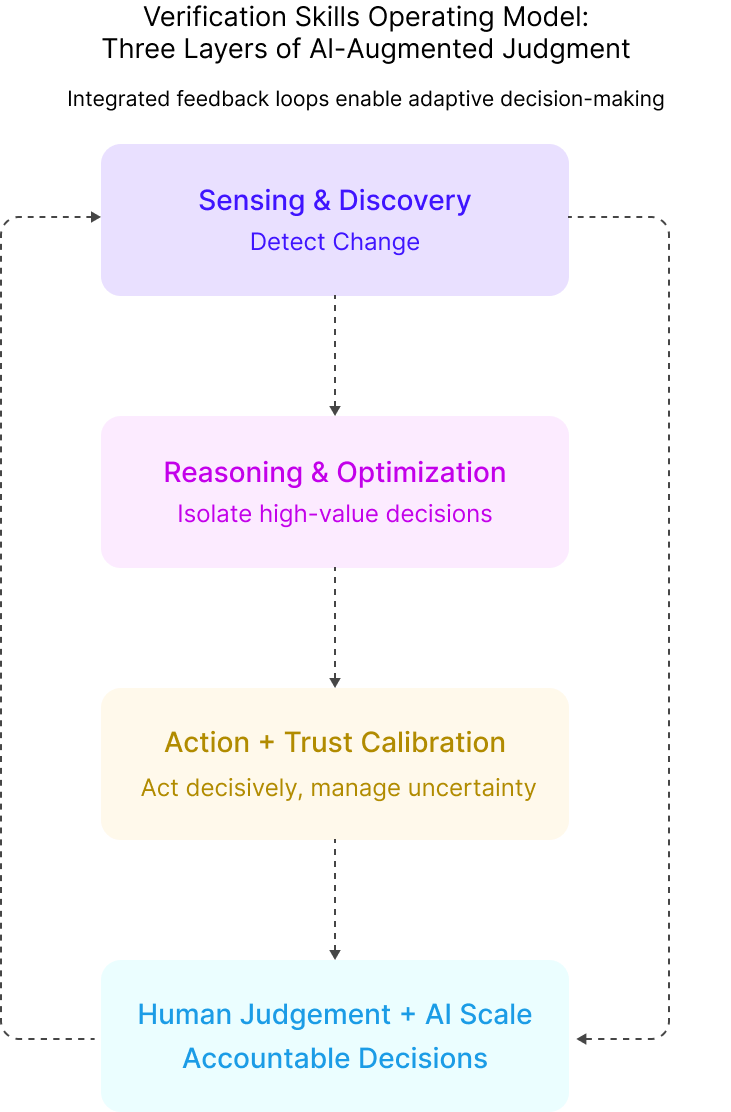

Verification skills operate at three levels:

What verification skills ARE:

Leadership capabilities embedded into daily SWP and TA workflows: the mechanism through which organizations retain agency while moving faster under uncertainty.

What they are NOT:

Compliance checkboxes, post-hoc audits, or technical controls that treat AI as inherently untrustworthy.

Why Skills Validation and AI Governance Matter in 2026

Three converging forces create urgency:

Skills-based hiring adoption grew from 40% in 2020 to 60% in 2024 (Soft. Oasis). 74% of HR leaders believe organizations are moving to skills-based approaches, but only 2% apply them across all talent processes, an execution gap driven by a lack of systematic validation (Draup).

Traditional workforce planning assets (job descriptions, organizational ratios, historical time-to-fill) are becoming obsolete as AI reshapes roles. Without continuous verification of workforce intelligence, SWP leaders risk building strategic plans on quicksand.

For Boards and Executive Teams:

The competitive question is no longer ‘Do we have AI tools?’ but ‘Is our organization structured to make high-quality decisions when the AI model is uncertain?’ Verification capabilities are what separate organizations that scale AI effectively from those that scale AI errors.

The 8 Verification Skills Framework

Drawing from research in AI governance and workforce transformation, we’ve identified eight root skills that matter most for SWP and TA leaders. Each skill directly addresses a specific failure mode in AI-augmented workforce decisions.

Discovery

What It Is:

Deeply understanding enterprise changes through telescopic (industry-level) and microscopic (team-level) lenses to detect shifts before they invalidate planning assumptions.

Why It's Important:

Workforce assets (JDs, time-to-fill benchmarks, org structures) become obsolete faster than before. Organizations lacking discovery rely on stale data and build plans that don't reflect reality.

Where It Delivers Value:

- Detect role evolution before hiring failures (TA discovering mobile-first customer success roles adjusts screening criteria, reducing mis-hires 20-30%)

- Compress research cycles from 4+ hours/week to <1 hour by leveraging continuous market sensing

- Anticipate skill obsolescence before workforce plans fail

KPIs it should generate:

- Research time per role (<1hr)

- Quality-of-hire lift (+15-20%)

- Time-to-fill reduction (26% faster with AI tools)

Resolve

What It Is:

Translating uncomfortable workforce insights into credible action. The guiding principle is “pessimism of the intellect, optimism of the will”: accepting uncomfortable truths while acting decisively.

Why It's Important:

Leaders without resolve either deny bad data (hiring for obsolete roles) or freeze while waiting for certainty. Both destroy momentum and competitive position.

Where It Delivers Value:

- Act on automation findings despite constraints (40% task automation = multi-year attrition-based transition plan)

- Execute difficult hiring pivots (talent shortage = build-vs.-buy reskilling strategy)

- Compress planning cycles from 8-12 weeks to 2-4 weeks by accepting imperfect information

KPIs it should generate:

- Insight-to-decision time (<2 weeks)

- Stakeholder confidence (+40%)

- Plan execution rate (85%+)

Constrained Optimization Thinking

What It Is:

Systematically evaluating build-vs.-buy and automation-vs.-augmentation trade-offs to maximize outcomes within financial/operational constraints.

Why It's Important:

Without it, leaders default to “hire more” or “pay more” without evaluating whether building, redeploying, or redesigning is cheaper and better.

Where It Delivers Value:

- Build-vs.-buy ML talent: Internal development 40% cheaper than external hiring with higher retention

- Optimize hiring geography: Secondary markets + hybrid deliver same talent at 30-40% lower cost

- Reskilling vs. hiring: Adjacent hire + upskilling delivers faster hiring at lower total cost than specialization

KPIs it should generate:

- Cost-per-hire reduction (15-20%)

- Internal development ROI (62% average HR project ROI vs. 41% firm average)

- Reskilling time-to-proficiency (20-40% reduction vs. baseline)

Polymath Thinking

What It Is:

Integrating insights from finance, technology, behavioral science, and communication into workforce decisions that address enterprise complexity.

Why It's Important:

Workforce challenges sit at intersections of strategy, finance, technology, and human behavior. Single-lens thinking produces technically sound but organizationally misaligned strategies.

Where It Delivers Value:

- Write business cases that win funding (TCO modeling + storytelling + stakeholder psychology = board approval)

- Design mobility programs that feel human-centered while delivering business outcomes

- Translate skills data for different audiences (ROI language for the CFO, technical accuracy for engineers, career opportunity for employees)

KPIs it should generate:

- Business case approval rate (45% baseline; strong cases achieve 85%+ approval)

- Executive alignment for workforce strategy

- Mobility program awareness and engagement lift

Experimentation

What It Is:

Testing workforce hypotheses through controlled pilots and scenario modeling before scaling. Value emerges through iteration, not one-time decisions.

Why It's Important:

Without experimentation, organizations either avoid AI adoption (paralyzed by risk) or deploy untested systems at scale (amplifying errors).

Where It Delivers Value:

- AI screening pilots on controlled candidate sets measure false positives/negatives before enterprise rollout

- Workforce scenario simulations model automation impact (baseline, moderate, aggressive) to pressure-test plans

- A/B test hiring strategies (AI screening vs. human-first) to validate improvement before scaling

KPIs it should generate:

- False positive/negative rates (<10%, <15%)

- Time-to-fill improvement (26% faster with AI tools vs. manual processes)

- Pilot-to-production accuracy validation
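
The pilot KPIs above can be made concrete with a short calculation. The following is a minimal sketch, assuming a pilot where every candidate receives both an AI screening decision and an independent human review; the `PilotResult` schema and the function names are hypothetical, and the rate definitions (errors divided by the relevant human-judged population) are one common convention rather than a definition given in this framework.

```python
from dataclasses import dataclass


@dataclass
class PilotResult:
    """One candidate in a controlled screening pilot (hypothetical schema)."""
    ai_advanced: bool      # did the AI screen pass the candidate?
    human_qualified: bool  # did the human review judge them qualified?


def error_rates(results: list[PilotResult]) -> tuple[float, float]:
    """Return (false_positive_rate, false_negative_rate).

    FPR = unqualified candidates the AI advanced / all unqualified candidates.
    FNR = qualified candidates the AI rejected / all qualified candidates.
    """
    fp = sum(r.ai_advanced and not r.human_qualified for r in results)
    fn = sum(not r.ai_advanced and r.human_qualified for r in results)
    unqualified = sum(not r.human_qualified for r in results)
    qualified = sum(r.human_qualified for r in results)
    fpr = fp / unqualified if unqualified else 0.0
    fnr = fn / qualified if qualified else 0.0
    return fpr, fnr


def ready_to_scale(fpr: float, fnr: float,
                   max_fpr: float = 0.10, max_fnr: float = 0.15) -> bool:
    """Apply the <10% / <15% targets listed in the KPIs above."""
    return fpr < max_fpr and fnr < max_fnr


# Illustrative pilot: 40 qualified and 60 unqualified candidates.
pilot = (
    [PilotResult(True, True)] * 36      # correctly advanced
    + [PilotResult(False, True)] * 4    # false negatives
    + [PilotResult(True, False)] * 3    # false positives
    + [PilotResult(False, False)] * 57  # correctly rejected
)
fpr, fnr = error_rates(pilot)
print(f"FPR={fpr:.0%}, FNR={fnr:.0%}, scale={ready_to_scale(fpr, fnr)}")
# prints "FPR=5%, FNR=10%, scale=True"
```

Keeping the thresholds as parameters lets a team tighten them per role family once pilot data accumulates.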

Inorganic Augmentation

What It Is:

Embedding intelligent agents into workflows so sensing, reasoning, and action occur continuously. Agents handle detection; humans retain judgment.

Why It's Important:

Manual sensing and human-scale pattern detection miss weak signals, delay action, and waste capacity on repetitive triage.

Where It Delivers Value:

- Continuous labor market monitoring triggers action plans when thresholds hit (wage spike → reskilling recommendations)

- Automated resume screening with human oversight gates reduces bias while increasing throughput (AI-assisted screening reduces false positives by 37.3%)

- Real-time skills inventory updates (no manual data entry) keep planning data fresh

KPIs it should generate:

- Signal detection latency (<24hrs)

- Screening throughput improvement with bias reduction (37.3% fewer false positives with AI)

- Data freshness (<24hrs old)

- Adoption rate (70%+)
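
As an illustration of "agents handle detection; humans retain judgment," the sketch below checks data freshness first and only then tests a wage-spike threshold, routing any trigger to human review rather than acting on it. The `WageSignal` schema, the action labels, and the 10% spike threshold are assumptions made for the example; only the 24-hour freshness target comes from the KPIs above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class WageSignal:
    """A labor market reading for one role (hypothetical schema)."""
    role: str
    previous_wage: float
    current_wage: float
    last_verified: datetime


def evaluate(signal: WageSignal, now: datetime,
             spike_threshold: float = 0.10,               # assumed threshold
             max_age: timedelta = timedelta(hours=24)) -> Optional[str]:
    """Return a suggested next step, or None when no action is needed."""
    # Stale data should never trigger a plan; ask for a refresh instead.
    if now - signal.last_verified >= max_age:
        return "refresh_data_before_acting"
    change = (signal.current_wage - signal.previous_wage) / signal.previous_wage
    if change >= spike_threshold:
        # The agent only proposes; a human approves or rejects the plan.
        return "propose_reskilling_plan_for_human_review"
    return None


now = datetime(2026, 1, 2, 12, 0, tzinfo=timezone.utc)
signal = WageSignal("data engineer", 100.0, 112.0, now - timedelta(hours=3))
print(evaluate(signal, now))  # prints "propose_reskilling_plan_for_human_review"
```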

Asymmetric Pattern Detection

What It Is:

Identifying where small subsets of causes drive outsized outcomes, then isolating high-leverage interventions.

Why It's Important:

Not all skills, roles, or workloads matter equally. Without asymmetric thinking, leaders dilute resources on low-impact optimizations and miss structural gains.

Where It Delivers Value:

- Isolate 5 critical skills driving 70% of time-to-fill delays (focus reskilling/automation on these, not all 500 skills)

- Identify the handful of roles creating bottlenecks (alternative talent pools, internal mobility, non-traditional credentialing)

- Detect which outsourced workloads drive disproportionate cost/risk (candidates for redesign/automation)

KPIs it should generate:

- High-impact skill identification (time-to-fill reduction 50%+ for targeted skills)

- Hiring efficiency lift for focus roles

- Cost avoidance from workload redesign
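
One simple way to find the "small subset of causes" is a Pareto-style cumulative ranking. The sketch below is illustrative only: the skill names and delay figures are made up, and `high_leverage_skills` is a hypothetical helper, not a platform feature. It returns the smallest set of top-ranked skills that together account for a chosen share of total time-to-fill delay.

```python
def high_leverage_skills(delay_days_by_skill: dict[str, float],
                         coverage: float = 0.70) -> list[str]:
    """Return the top skills that jointly explain `coverage` of total delay."""
    total = sum(delay_days_by_skill.values())
    if total <= 0:
        return []
    # Rank skills from the largest delay contribution to the smallest.
    ranked = sorted(delay_days_by_skill.items(),
                    key=lambda item: item[1], reverse=True)
    selected: list[str] = []
    running = 0.0
    for skill, days in ranked:
        selected.append(skill)
        running += days
        if running / total >= coverage:
            break
    return selected


# Made-up delay totals (days of time-to-fill delay attributed to each skill).
delays = {
    "mlops": 400, "kubernetes": 200, "data_modeling": 150,
    "sql": 100, "excel": 80, "presentation": 70,
}
print(high_leverage_skills(delays))
# prints ['mlops', 'kubernetes', 'data_modeling']
```

In this toy data, three of six skills explain 75% of the delay, which is where reskilling or automation effort would be focused first.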

Trust-Forward Thinking

What It Is:

Working effectively with imperfect AI while maintaining momentum. Occasional errors are inevitable; the focus is improving decision quality over time.

Why It's Important:

Without trust-forward thinking, organizations fall into analysis paralysis (over-verifying) or skepticism (avoiding AI). Both slow hiring and freeze plans.

Where It Delivers Value:

- Accept that AI screening requires calibration; focus on reducing bias and improving accuracy iteratively, not eliminating all errors

- Use AI forecasts to guide planning even when signals are noisy; refine models dynamically rather than waiting for perfect certainty

- Validate high-impact decisions (not every data point) through human judgment, enabling speed without abandoning oversight

KPIs it should generate:

- Time-to-hire (global average 44 days; target reduction to 20 days with AI + processes)

- Model accuracy improvement per iteration cycle

- Decision velocity (time from AI recommendation to action)

How to Evaluate AI Workforce Planning Platforms: 6 Key Criteria

Evaluate platforms as judgment infrastructure, not just data tools. Ask: Does this platform help our teams develop and exercise verification skills at scale?

Six Critical Evaluation Dimensions

Data Provenance & Continuous Verification

Test how often labor market and workforce data refreshes, whether timestamps/sources are visible, and if outdated information is flagged. Workforce data decays rapidly; verification-enabled platforms treat freshness as a core capability (PrismForce).

Red flags:

"Real-time" without specific refresh SLAs; no visibility into when data was last verified.

Skills Decomposition & Task-Level Intelligence

Test whether platforms decompose jobs into tasks, map those to specific skills, and track skill emergence/decline by function and industry. Without task-level granularity, leaders can't answer: "Which tasks should be automated? Which require human judgment?"

Red flags:

Static taxonomies; no visibility into how tasks within roles are changing.

Explainable Prioritization & Reason Codes

Test whether prioritization scores include transparent reason codes and customizable weights. If platforms can't explain "why this candidate," users will ignore recommendations.
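
To make "reason codes and customizable weights" tangible when questioning a vendor, here is a minimal sketch of what an explainable score could look like. It is an assumption about one possible design, not a description of any specific platform: the signal names, weights, and the `score_with_reasons` helper are hypothetical.

```python
def score_with_reasons(signals: dict[str, float],
                       weights: dict[str, float]) -> tuple[float, list[tuple[str, float]]]:
    """Return (score, reasons) for one candidate.

    `signals` holds values in [0, 1]; `weights` are user-adjustable and are
    normalized here so the score also stays in [0, 1]. `reasons` lists each
    signal's contribution, largest first, so the score can be explained.
    """
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    contributions = {
        name: (weight / total_weight) * signals.get(name, 0.0)
        for name, weight in weights.items()
    }
    reasons = sorted(contributions.items(), key=lambda item: item[1], reverse=True)
    return sum(contributions.values()), reasons


# Hypothetical weights a TA team might tune, and one candidate's signals.
weights = {"task_match": 5, "adjacent_skills": 3, "profile_recency": 2}
signals = {"task_match": 0.8, "adjacent_skills": 0.5, "profile_recency": 1.0}

score, reasons = score_with_reasons(signals, weights)
print(f"score={score:.2f}")
for name, contribution in reasons:
    print(f"  {name}: {contribution:.2f}")
```

A platform that exposes something equivalent to `reasons` lets a recruiter see that, for this candidate, task match contributed most to the score, and lets the team re-weight signals when hiring conditions change.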

Red flags:

"AI score" without explanation; no customization options; scores that don't update when conditions change.

Workflow Embedding & Agentic Integration

Test whether platforms push insights into existing workflows (ATS, HRIS, Slack) and trigger actions automatically based on rules. If users must "go check the platform," adoption fails.

Red flags:

Standalone app requiring separate login; no API support; "integration" means manual CSV exports.

Governance, Bias Detection & Audit Trails

Test whether platforms provide audit logs showing who made decisions, when, and based on what AI recommendations. AI systems can perpetuate bias without systematic governance (Jobillico).

Red flags:

No bias detection metrics; vague "ethical AI" claims without documentation.

Scenario Modeling & Experimentation Support

Test whether platforms simulate multiple workforce scenarios and allow "what-if" modeling for build-vs.-buy decisions. Experimentation enables leaders to verify that AI recommendations produce better outcomes than baseline.

Red flags:

Static reporting; no ability to model alternative futures; scenario modeling requires professional services.

The 4 Highest-Value Use Cases to Improve AI Hiring Accuracy and Workforce Planning ROI

Skills Validation for AI-Powered Talent Acquisition

The Problem:

AI resume screening rejects 38% of qualified candidates before human review (Verity.ai). Without skills validation, hiring teams can't distinguish signal from noise.

Why It Matters:

85% of employers use skills-based hiring (Test Gorilla), but validating skills at scale is critical to reducing false positives/negatives and improving quality-of-hire.

How Draup Addresses This:

Draup's Talent Acquisition platform deconstructs roles into tasks and skills, then validates candidate profiles against task-level requirements using 360° profiles from 850M+ professionals. The platform surfaces skill adjacencies, enables blind hiring mode to reduce bias, and provides audit trails for every decision (Draup).

Verification Skills Applied:

Discovery (skills that predict success vs. proxies), Experimentation (pilot AI screening on controlled sets), Trust-Forward Thinking (iterative calibration)

KPIs:

- False positive/negative rates (<10%, <15%)

- Time-to-fill (26% faster with AI tools)

- Quality-of-hire (+15-20%)

- Bias metrics (demographic parity in screening)

Ensuring Skills Data Accuracy for Strategic Workforce Planning

The Problem:

Organizations plan their workforce using outdated org charts and static job descriptions. As roles evolve, these assets become liabilities (Draup).

Why It Matters:

Plans grounded in outdated data don't survive market shifts. Organizations need continuous intelligence on talent supply, skill adjacencies, and emerging skills to enable dynamic planning (Draup).

How Draup Addresses This:

Draup's Strategic Workforce Planning platform refreshes talent and demand data daily from 75,000+ sources, enabling scenario modeling at the workload level, automation impact analysis, and competitor benchmarking (Draup). Full audit trails and human-in-the-loop reviews reduce bias (Draup).

Verification Skills Applied:

Constrained Optimization (build-vs.-buy trade-offs), Asymmetric Pattern Detection (isolating critical high-impact skills), Resolve (translating uncomfortable findings into action)

KPIs:

- Workforce plan accuracy (±10% vs. ±30% baseline)

- Scenario modeling cycle time (weeks → days)

- Cost avoidance from optimization

- ROI: 30–40% faster planning cycles, 25% fewer skill mismatches (Draup)

Governing Skills Verification Across HR Tech Stack

The Problem:

Organizations deploying multiple AI tools across ATS, HRIS, and LMS lack unified governance. Data inconsistencies proliferate; audit trails don't exist; bias detection is ad hoc.

Why It Matters:

Boards and regulators scrutinize AI governance in workforce decisions. SOC 2, GDPR, and ISO 27001 compliance require audit trails, access controls, and bias detection (Draup).

How Draup Addresses This:

Draup maintains a centralized skills architecture unifying internal role data with 850M+ profiles (Draup). The platform combines automated evaluations with human-in-the-loop reviews, reducing demographic bias. It is SOC 2, GDPR, and ISO 27001 compliant, with full audit trails and role-based access controls (Draup).

Verification Skills Applied:

Trust-Forward Thinking (clear thresholds for automation vs. human review), Experimentation (testing governance on non-critical decisions first)

KPIs:

- Audit trail completeness (100% of decisions logged)

- Bias detection (<2% demographic disparity)

- Skills definition consistency

- Compliance query resolution (<5 business days)
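
The bias detection KPI needs an operational definition before it can be monitored. The sketch below assumes one possible reading: "demographic disparity" as the percentage-point gap between the highest and lowest screening pass rates across groups. That interpretation, the group labels, and the counts are assumptions for illustration; legal and compliance teams may require a different measure.

```python
def selection_rates(outcomes: dict[str, tuple[int, int]]) -> dict[str, float]:
    """Map each group to its pass rate, given (advanced, screened) counts."""
    return {
        group: advanced / screened
        for group, (advanced, screened) in outcomes.items()
        if screened > 0
    }


def parity_gap(outcomes: dict[str, tuple[int, int]]) -> float:
    """Largest difference in pass rate between any two groups."""
    rates = selection_rates(outcomes)
    if not rates:
        return 0.0
    return max(rates.values()) - min(rates.values())


# Made-up screening counts: (candidates advanced, candidates screened).
outcomes = {"group_a": (120, 400), "group_b": (87, 300)}

gap = parity_gap(outcomes)
status = "within target" if gap < 0.02 else "needs human review"
print(f"gap={gap:.1%} ({status})")  # prints "gap=1.0% (within target)"
```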

Reducing AI False Positives/Negatives in Candidate Screening

The Problem:

False negatives (qualified candidates wrongly rejected) limit sourcing reach and reduce diversity. 38% of qualified candidates are rejected before human review.

Why It Matters:

False positives and negatives both erode AI hiring tool ROI. Hiring managers lose confidence when quality-of-hire declines; recruiters abandon the platform for manual screening.

How Draup Addresses This:

Draup's platform scores candidate fit at the task level (not just keywords), using 360° profiles validated against job descriptions. A/B testing on controlled cohorts measures false positive/negative rates before scaling. Blind hiring mode reduces bias; every decision is logged for continuous refinement (Draup).

Verification Skills Applied:

Experimentation (controlled testing before scaling), Trust-Forward Thinking (iterative calibration), Asymmetric Pattern Detection (identifying roles/segments with most errors)

KPIs:

- False positive/negative rates (<10%, <15%)

- Time-to-fill (26% faster)

- Quality-of-hire (+15-20%)

- Bias metrics (demographic parity)

- Offer acceptance rate (+15%)

How to Measure ROI for AI in Talent Acquisition and Workforce Planning

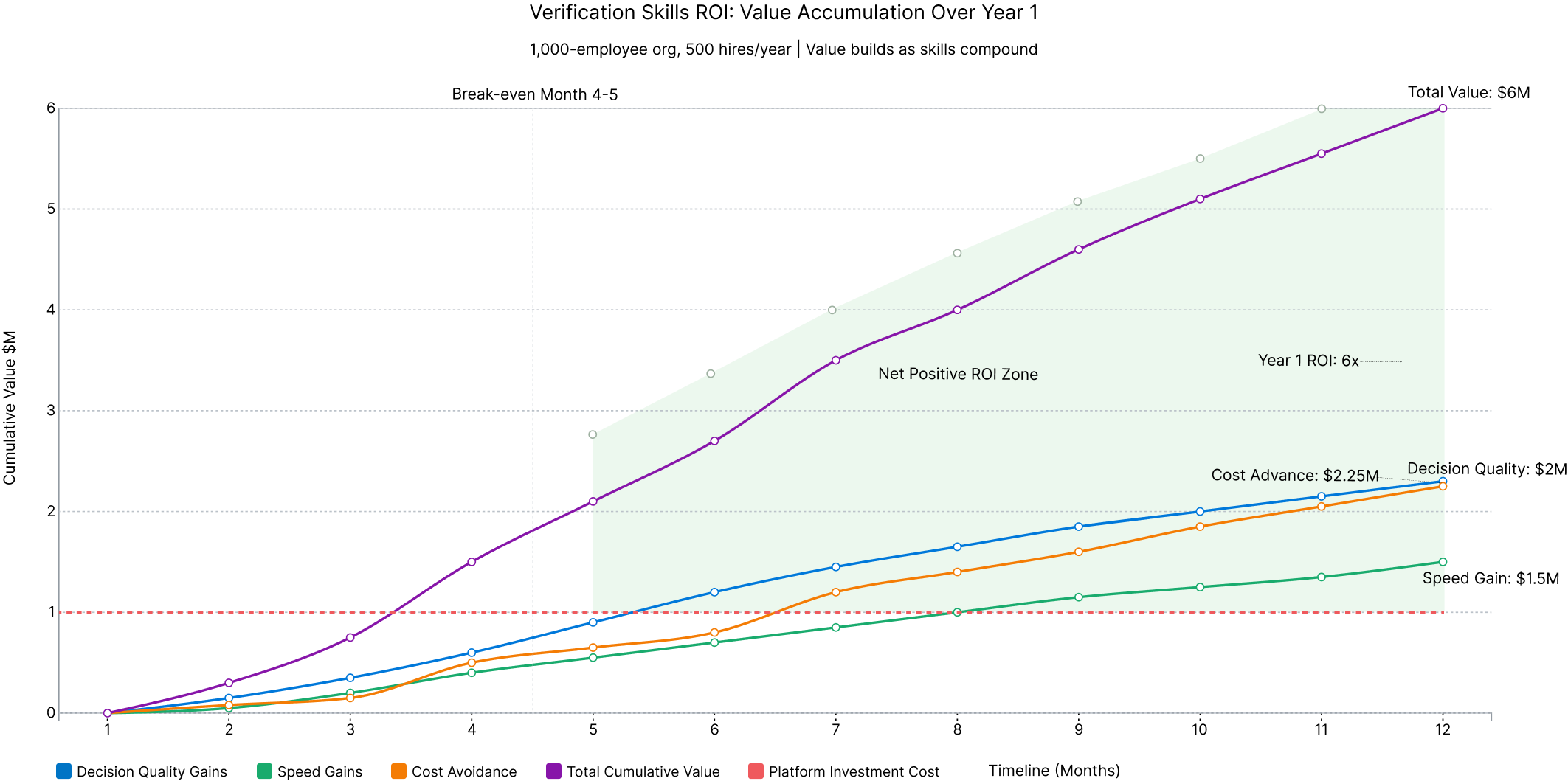

Verification ROI = (Decision Quality Improvement + Speed Gains + Cost Avoidance) ÷ (Platform + Training Investment)

Organizations without verification see 38% of qualified candidates rejected by AI before human review (Verity.ai), and 56% struggle with false positive/negative rates (Second Talent). Each mis-hire costs 50-75% of annual compensation in replacement costs (Draup).

Example: 500 hires/year. AI without verification creates a 10% higher mis-hire rate = 50 mis-hires × $75K replacement cost = $3.75M annual cost. Verification reduces false negatives to <15%, cutting mis-hires by 60%. Value: $2.25M/year.

Every day a revenue-generating role stays open costs that role's daily revenue contribution (Draup). Verification-enabled platforms reduce workforce planning cycles from 4 weeks to 1 week.

Example: 50 critical hires × 15 business days faster per hire (a 3-week acceleration) × $400/day fully loaded cost = $300K value.

Agency fees range from $10K to $60K per hire (Draup). Verification-enabled internal mobility increases internal fill rates.

Example: 500 hires/year, internal fill rate increases from 20% to 35%. 75 additional internal hires × $30K avoided external cost = $2.25M annual savings.
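
The three worked examples can be combined into the ROI formula with a few lines of arithmetic. The sketch below reproduces the figures from the examples; the function names are hypothetical, and the platform and training costs are placeholder values chosen only to show the division, since this framework does not state an investment figure.

```python
def mis_hire_savings(hires_per_year: int, extra_mis_hire_rate: float,
                     replacement_cost: float, reduction: float) -> float:
    """Decision quality improvement: avoided mis-hire replacement cost."""
    baseline_cost = hires_per_year * extra_mis_hire_rate * replacement_cost
    return baseline_cost * reduction


def speed_value(critical_hires: int, days_saved_per_hire: int,
                cost_per_day: float) -> float:
    """Speed gains: days saved across critical hires, valued per day."""
    return critical_hires * days_saved_per_hire * cost_per_day


def internal_fill_savings(hires_per_year: int, rate_before: float,
                          rate_after: float, avoided_cost: float) -> float:
    """Cost avoidance: extra internal fills times avoided external cost."""
    return hires_per_year * (rate_after - rate_before) * avoided_cost


def verification_roi(quality: float, speed: float, cost_avoidance: float,
                     platform_cost: float, training_cost: float) -> float:
    """(Quality + Speed + Cost Avoidance) / (Platform + Training)."""
    investment = platform_cost + training_cost
    if investment <= 0:
        raise ValueError("investment must be positive")
    return (quality + speed + cost_avoidance) / investment


quality = mis_hire_savings(500, 0.10, 75_000, 0.60)       # about $2.25M
speed = speed_value(50, 15, 400)                          # $300K
avoided = internal_fill_savings(500, 0.20, 0.35, 30_000)  # about $2.25M

# Placeholder investment figures (assumptions, not from the source).
roi = verification_roi(quality, speed, avoided,
                       platform_cost=600_000, training_cost=200_000)
print(f"Total value: ${quality + speed + avoided:,.0f}; ROI multiple: {roi:.1f}x")
```

Swapping in an organization's own hire volumes, cost assumptions, and actual platform and training spend turns this into a first-pass business case that finance can audit line by line.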

How Draup Supports Human Judgment in AI-Powered Workforce Decisions

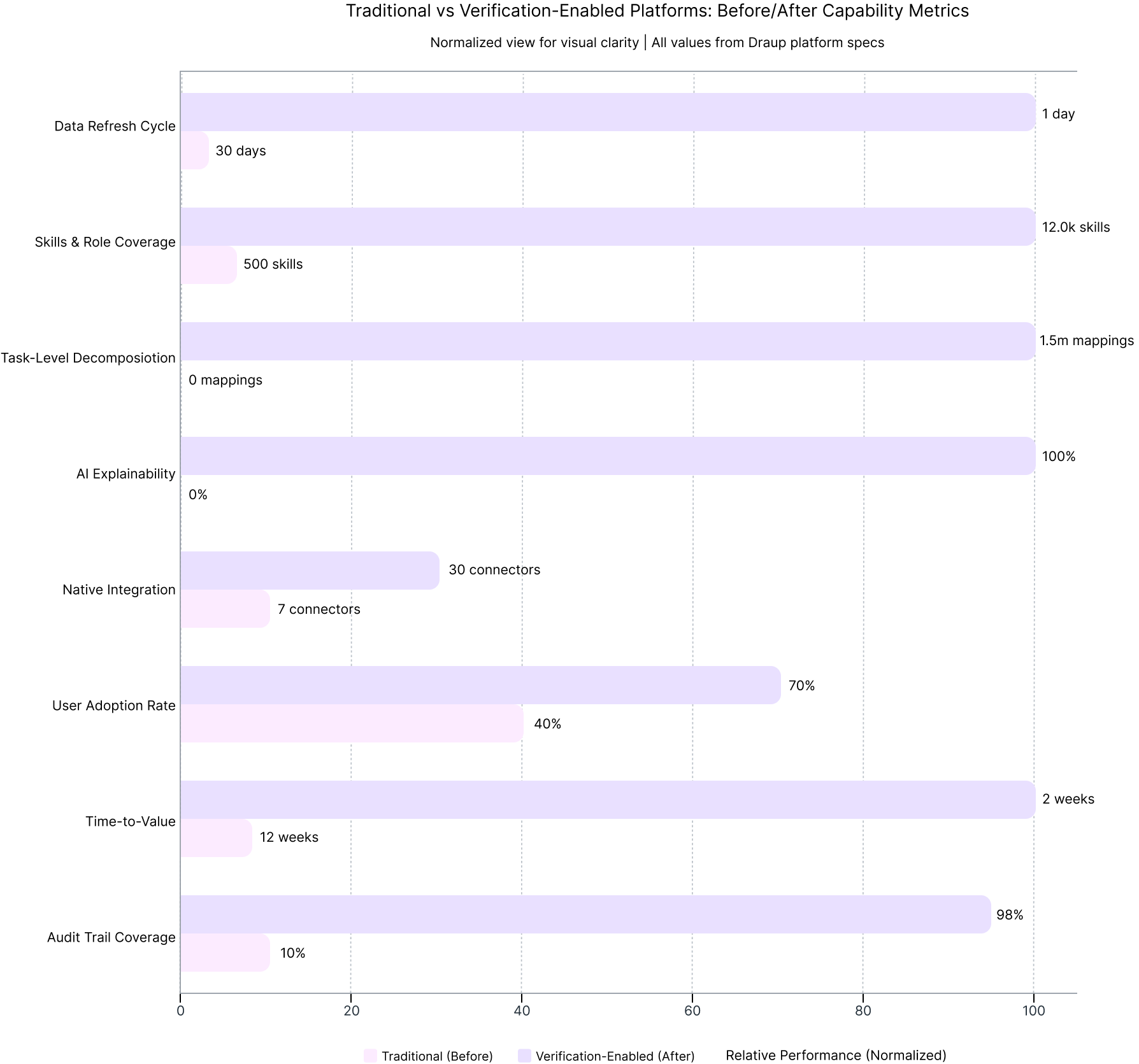

We built our platform to support verification capabilities: continuous data refresh across 12K+ skills and 850M+ professionals with visible timestamps and confidence scoring; task-level skills decomposition tracking emergence/decline across 33+ industries; explainable prioritization with transparent reason codes and customizable weights; native integration with 30+ ATS/HRIS platforms; SOC 2/GDPR/ISO 27001 compliance with full audit trails and bias detection; scenario modeling and A/B testing support.

Our methodology is informed by work with CHROs and CFOs at 5 of the Fortune 10, who demand the same rigor for human capital decisions as for financial modeling.

What This Means for Workforce Leadership

Verification skills are a permanent leadership capability in a world where uncertainty is structural and machine-generated insight is abundant. Organizations that institutionalize verification move faster with confidence, adapt without losing control, and maintain credibility with stakeholders.

In the AI age, competitive advantage belongs to enterprises that preserve human judgment while scaling machine intelligence, not by resisting AI, but by building verification capabilities that make AI-human collaboration systematically effective.

FAQ: Verification Skills Questions You Should Ask

What are verification skills?

Foundational human capabilities (discovery, resolve, constrained optimization thinking, polymath thinking, experimentation, inorganic augmentation, asymmetric pattern detection, and trust-forward thinking) that enable leaders to work with AI without ceding judgment.

How do we know if we need verification capabilities?

If you struggle with skills validation (62% of HR professionals do, per Soft. Oasis), if AI rejects qualified candidates (38% false negative rate, per Verity.ai), if outdated workforce data is causing misallocated budgets (Prism Force), or if leadership lacks confidence in AI recommendations, you need verification.

What's the biggest risk if we don't invest?

Ceding agency to AI without realizing it. Organizations fall into analysis paralysis (distrusting AI, over-verifying, slowing decisions) or over-trust (accepting AI recommendations without interrogation, scaling errors). Both erode judgment quality and competitive advantage.

Can verification skills be trained?

Yes, through structured training on the 8-skill framework, experimentation and pilots that create safe learning environments, verification checkpoints embedded into workflows, and peer coaching. Organizations see measurable improvements in decision quality within 60-90 days.
