Mapping the Evolving AI Tech Stack: How Roles Interact Across the Modern AI Ecosystem

Introduction

The current tech stack shift is structural. Gen AI and cloud-native infrastructure are changing how work is divided across the functions of Software Engineering, IT, and Data Science. Instead of operating through sequential handoffs, these functions are becoming interdependent—with shared accountability for production reliability, security, data readiness, and continuous iteration.

In our latest BrainDesk report, we find that this is driving role convergence and the emergence of more hybrid profiles. For example, software teams are embedding ML into products, data teams are taking on more pipeline/production responsibilities, and IT is expanding into orchestrating secure AI-ready environments. Hiring signals reinforce this shift, with AI skill requirements rising across job demand.

KEY DATA

- Demand for AI-based skills in software jobs surged by ~50% from Q3’23 to Q2’25 due to broader AI-related hiring growth.

- The share of data science job postings requiring LLM / open-source framework experience rose from 9% (Q3’23) to 16.6% (Q2’25)—an ~80% increase over the period, reinforcing that prompt-centric workflows and faster iteration are becoming baseline expectations.

AI in Software Engineering: Demand Rising (US vs Global)

Tracking the share of software engineering job postings that include AI-related skills over time (split across the U.S. and global markets), we find that the U.S. share is consistently higher than the global share.

There's also a slight acceleration post-Q2’24 as AI skills become expected across a larger proportion of functions rather than being restricted to explicitly titled AI roles. Demand for AI-based skills in software jobs surged by ~50% from Q3’23 to Q2’25, driven by broader AI-related hiring growth.

Share of AI-related Tools / Skills in Software Development Job Postings (Jul’23 – Jun’25) – Global vs USA Comparison

Note: We have considered only software developer workload-related job roles & filtered the AI-related skills to identify the relevant skills. We’ve analysed a total of 4.9 million global job postings and 1.4 million US job postings.

Core Languages Stay Dominant: AI-Adjacent Skills Rise

In the skills distribution shown in the graph below, foundational languages still dominate demand. Python and Java together account for 87% of U.S. software job postings over the past year.

Cloud and DevOps tooling remains structurally important (for example, in cloud deployment and orchestration), while AI assistants and AI-augmented development appear as a distinct, meaningful slice. This signals that enterprises are hiring builders who can ‘ship with AI,’ not just builders who can code.

Penetration of Tools, Languages & Models in Software Job Postings USA (Jul’24-Jul’25)

Note: Since multiple skills can appear within a single job description, skills overlap across JDs. Therefore, the shares will not sum to 100%.
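The overlap described in this note can be illustrated with a small sketch. The postings below are hypothetical and made up purely for illustration (they are not the report's data), and `skill_penetration` is an assumed helper name. The sketch shows how per-skill penetration is computed against the number of postings, so the shares can add up to well over 100%.

```python
from collections import Counter

# Hypothetical postings, invented for illustration only (not report data).
# Each posting is the set of skills mentioned in one job description.
postings = [
    {"Python", "AWS"},
    {"Java", "Kubernetes"},
    {"Python", "Java", "Docker"},
    {"Python"},
]


def skill_penetration(postings):
    """Return the percentage of postings that mention each skill."""
    total = len(postings)
    if total == 0:
        return {}
    # Count each skill once per posting in which it appears.
    counts = Counter(skill for skills in postings for skill in skills)
    return {skill: 100.0 * n / total for skill, n in counts.items()}


shares = skill_penetration(postings)
for skill, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{skill}: {share:.0f}% of postings")

# Because one posting can list several skills, the shares are not
# mutually exclusive and their sum exceeds 100%.
print(f"Sum of shares: {sum(shares.values()):.0f}%")
```

The denominator is the number of postings, not the number of skill mentions, which is why each bar in such a chart should be read independently rather than as a slice of a whole.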

Mainstream AI Hiring: Late-2024 Search Signals

IT follows the same pattern as software, but at lower overall penetration: U.S. postings move from 7.0% to 10.35% AI-skill share between Q3’23 and Q2’25. This marks approximately a 48% rise in less than 2 years.
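The growth figures quoted in this report are relative changes in a share, which is different from the change in percentage points. As a minimal sketch (the function names are illustrative assumptions), the arithmetic can be checked as follows, using only the shares stated in the text.

```python
def relative_change_pct(start: float, end: float) -> float:
    """Relative change from start to end, as a percentage of start."""
    if start == 0:
        raise ValueError("start must be non-zero")
    return (end - start) / start * 100.0


def point_change(start: float, end: float) -> float:
    """Absolute change between two shares, in percentage points."""
    return end - start


# U.S. IT postings: AI-skill share, Q3'23 -> Q2'25 (figures from the text).
it_start, it_end = 7.0, 10.35
print(f"IT: +{point_change(it_start, it_end):.2f} pp, "
      f"{relative_change_pct(it_start, it_end):.1f}% relative")

# Data science postings requiring LLM / open-source framework experience,
# Q3'23 -> Q2'25 (figures from the key data section).
ds_start, ds_end = 9.0, 16.6
print(f"Data science: +{point_change(ds_start, ds_end):.2f} pp, "
      f"{relative_change_pct(ds_start, ds_end):.1f}% relative")
```

For IT, a rise of 3.35 percentage points on a base of 7.0% works out to roughly 48% relative growth, matching the figure above. For data science, the rounded shares of 9% and 16.6% give about 84%, which the report states as ~80%.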

The most notable change is the increase around Q4’24. This is consistent with enterprises operationalizing AI within IT service delivery, automation, and security workflows rather than limiting it to experimentation.

Share of AI Skills in IT Job Postings (Jul’23 – Jun’25) – Global vs USA Comparison

Note: We have considered only IT workload-related job roles & filtered the AI-related skills to identify the relevant skills.

Modern IT Priorities: Digital Transformation Tech Stack

The bar distribution below shows cloud deployment skills as the most prevalent requirements in U.S. IT postings, alongside orchestration and IaC tools.

Observability tooling and security posture/zero-trust concepts also show material demand, reinforcing that modern IT roles are increasingly engineering roles responsible for automated, governed environments, not just ticket-based support.

Penetration of Core IT, Tools / Libraries in IT-related Job Postings USA (Jul’24-Jul’25)

Note: Since multiple skills can appear within a single job description, skills overlap across JDs. Therefore, the shares will not sum to 100%.

Data Science Goes LLM-First: LLM & Open Framework Demand Accelerates

The share of data science job postings requiring experience with LLMs and open-source frameworks has grown by roughly 80% over the past two years, as shown in the graph below.

Low-code, AutoML and prompt-centric workflows reinforce a practical point for workforce strategy: the center of gravity is moving toward faster iteration, model operationalization, and natural-language-driven workflows—changing what productivity looks like in data roles.

Increased Demand for LLMs in Data Science Job Postings over the Past Two Years (2023-2025)

Note: The occupation titles, on which the analysis has been done, are broad in nature and not limited to any particular industry.

The New Delivery Model: Shared Rhythm Over Handoffs

Organizations are moving from siloed development and reactive IT towards integrated, cloud-native ecosystems. Software and IT teams leverage security, automation, and AI/ML to speed up delivery, optimize infrastructure, and boost resilience.

Traditional workloads

Software

Traditional software development was siloed, manual, and prone to delays due to isolated coding, late IT involvement, and minimal automation.

Example

Developers created new product features and then handed them over to IT without aligning on capacity planning, runtime dependencies, or performance implications.

IT

IT teams operated in static, manually managed environments and reacted to issues only after they occurred. This left them disconnected from the development process.

Example

IT teams managed physical data centers and resolved outages, often without visibility into upcoming software needs.

These shifts can be seen as a direct driver of role redesign. Hiring and internal mobility will increasingly target people who can operate in shared pipelines and understand upstream and downstream constraints.

Evolving Interdependencies - Integrated Workloads

Syncing for Production AI: MLOps + Data Infra + Monitoring

Traditional workloads

Data Science

Data science was traditionally reporting-driven and research-heavy, focusing on insights and prototype models rather than scalable, production-ready solutions.

Example

A data scientist might deliver a detailed trend analysis or a high-accuracy prototype model, but it remains confined to reports or test environments without integration into business operations.

IT

Traditional IT in data science played only a supporting role, lacking collaboration, tools, and processes to manage or scale machine learning models effectively.

Example

IT provisioned Hadoop clusters or on-premise servers, but these were managed separately from data science activities.

Data Science and IT now operate in sync. IT delivers scalable infrastructure, security, and deployment pipelines while data scientists refine and improve models in real time. This collaboration enables enterprise-wide, production-ready AI.

Current Interdependencies - Integrated Workloads

One Operating Model: Shared Ownership Takes Hold

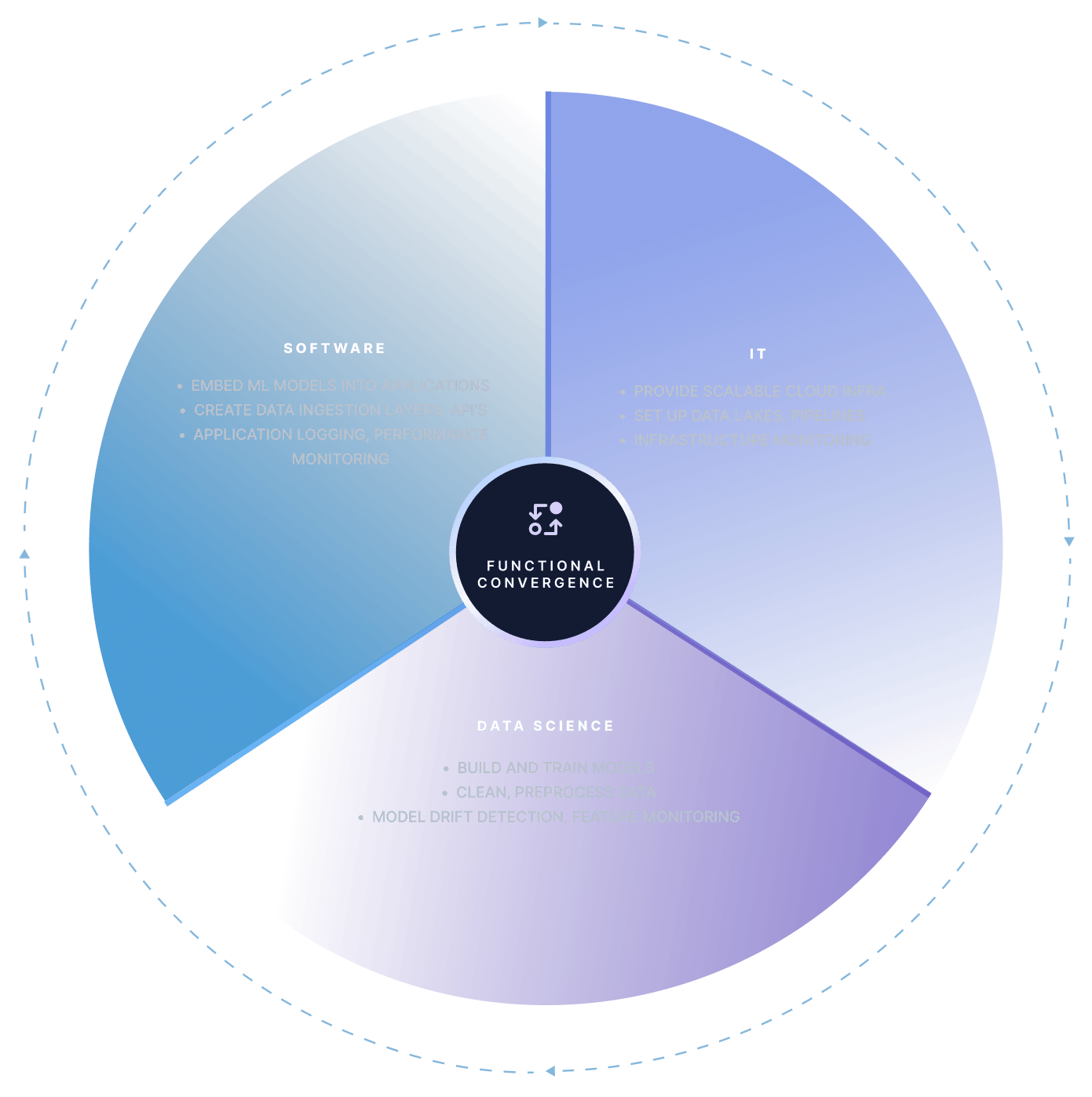

Traditionally separate roles in software, IT, and data science now integrate closely through shared responsibilities. This enables faster innovation, seamless experimentation, and stronger compliance.

The diagram shows the functional convergence of these three roles.

Scaling Hybrid Delivery: Platform Engineering & Shared Infrastructure

Platform engineering is becoming a critical enabler for scaling modern workloads by building shared infrastructure, standardizing environments, and fostering seamless cross-team collaboration. In the end, this will drive faster deployments, reduced complexity, and greater organizational agility.

Evolving Workloads

Complex Cloud-Native Ecosystems

Microservices, containers, and multi-cloud setups have increased operational complexity.

AI & ML Integration

Software and data science teams now require scalable GPU and infra pipelines, adding new layers of dependency.

Growing Cross-Team Interdependencies

Development, IT, and data science teams are increasingly interconnected, requiring tighter alignment to deliver at scale.

Limitations of Current Approach

DevOps at Scale Fails: Tool sprawl and inconsistent processes make scaling hard

Ticket-Driven IT Support: Slows down developers and introduces bottlenecks

Lack of Standardization: Each team builds its own workflows, causing governance and cost issues

Expansion of Platform Engineering

Siloed Approach

- Separate tools for IT, Data Science & Software

- Manual infrastructure setup by IT

- Slow deployment cycles & misaligned workflows

Engineering Expansion

- Builds shared infrastructure & pipelines

- Standardizes environments (cloud, CI/CD, MLOps)

- Enables cross-team access & collaboration

Integrated Workflow

- Faster, automated deployments

- Seamless integration of ML models into applications

- Strong collaboration across teams

A unified MLOps platform allows data scientists to train models, IT to manage scalable infrastructure, and software engineers to integrate models into apps effortlessly.

The Path Forward: Standardize platforms, reskill teams, and industrialize hybrid delivery

Across software, IT, and data science, we see the same structural shift: AI is increasing the speed of execution while cloud-native delivery increases the need for shared standards, governance, and integrated pipelines.

That combination drives role convergence and makes hybrid capability (AI + cloud + automation + lifecycle ownership) a mainstream requirement rather than a specialist edge. For large enterprises, the near-term risk is not only external scarcity—it is internal fragmentation, where teams adopt tools faster than they can build consistent ways of working.

The actionable response is workforce architecture, not isolated training. We should treat platform engineering, MLOps, IaC, and observability as “connective tissue” skills and invest in repeatable pathways that bridge legacy job families. Hiring will still matter, but the scalable advantage will come from internal mobility, stack standardization, and competency models that recognize AI-assisted work as the new default operating mode.